In Andrew Niccol's 1997 film Gattaca, two brothers swim out into the open ocean. It is a game they have played since childhood — a test of nerve, endurance, will. The first to turn back loses.

Anton is the younger brother, but he was engineered to be more. His parents, chastened by the genetic lottery that produced their firstborn, submitted their second conception to the geneticist's hand. Anton's genome was curated before his first cell divided — optimised for height, for health, for intelligence, for every parameter the science could measure and the market could price. He is, by every metric his civilisation has devised to rank human beings, the superior specimen.

Vincent, the older brother, was conceived the old way. No curation. No optimisation. His genome carries the unedited inheritance of chance — myopia, a heart condition, a projected lifespan of 30.2 years, and an estimated IQ that bars him from every institution his society reserves for the genetically worthy. At birth, a nurse pricked his heel, sequenced his blood, and read his life sentence from a printout. His father, hearing the numbers, withdrew the family name he had intended to give his firstborn. Vincent was not worth the name. The genome had spoken.

The boys swim. Anton, the engineered son, has always been stronger, faster, more. But tonight the pattern breaks. They swim past the point where Anton usually wins. Past the point where Vincent usually turns. Past the breakwater, past the lights, into the dark open water where the ocean floor drops away and there is nothing beneath them but depth. Anton stops. He calls out. He is afraid. He turns back.

Vincent keeps swimming.

Later, treading water in the dark, holding his exhausted brother above the waves, Vincent is asked the question the entire film has been building toward — the question that the IQ debate, the genomic speculation, the four-century apparatus of racial hierarchy has never been able to answer. Anton, gasping, asks: "How are you doing this, Vincent? How have you done any of this?"

Vincent answers: "I never saved anything for the swim back."

The answer is not genetic. It is not parametric. It cannot be sequenced from a heel-prick or predicted from a polygenic score or ranked on a bell curve. It comes from somewhere the geneticist's instrument cannot reach — from the place where a mind decides what it will be, regardless of what the measurements say it should be. The answer comes from consciousness. From will. From the architectural capacity to conceive a possibility, hold it against every parameter that says it is impossible, and actualise it anyway.

Gattaca's civilisation measured Vincent and found him wanting. His genome predicted a short life, a weak heart, an inferior mind. The prediction was not wrong about the parameters. It was wrong about the person — because the person is not the parameters. The person is the architecture that uses the parameters, that operates through them, that refuses to be ranked by them. Vincent does not outswim his brother because his muscles are stronger. He outswims him because his consciousness — the same consciousness his brother possesses, the same consciousness every human being possesses — is not saving anything for the swim back.

The IQ debate is the heel-prick scene scaled to civilisations. A continent is measured. A number is assigned. A life sentence is read from the printout: sub-Saharan Africa, mean IQ 70, two standard deviations below the Western mean, genetically determined, permanently fixed, the genome has spoken. From this number, a world is constructed — a world in which African poverty is natural, African governance is hopeless, and the appropriate response is either charity from the left or embryo selection from the right, because the parameters have been measured and the parameters are the person.

But the parameters are not the person. They never were. The person is the architecture — the consciousness that operates through the parameters, that works through them, that refuses to be contained by them. And that architecture is the same in every human being who has ever lived, from the woman in Western Province who manages a household economy of stunning complexity without a single year of formal education, to the physicist at Cambridge who cannot manage her own household at all. The architecture is invariant. The parameters vary. And the IQ test — like the heel-prick, like the geneticist's printout, like every instrument ever devised to rank human beings by measurable outputs — captures the parameters and misses the architecture entirely.

This essay is about that error. It is about the claim that African minds are measurably, genetically, permanently inferior to other minds — and about why that claim is empirically unsupported, historically constructed, institutionally maintained, and ontologically impossible. It moves through the data, through the genome, through the history, through the institutions, through the physics, and arrives at a Person — the Person whose image every mind bears, whose architecture every consciousness mirrors, and whose death and resurrection demonstrate that the source of intelligence cannot be ranked by the instruments His creatures have built to measure each other.

Vincent never saved anything for the swim back. Neither did the Person who walked into death for the sake of every mind the IQ test has ever measured — and rose, because the source cannot be contained by what it constitutes.

The ocean is deep. The swim is long. And the parameters are not the person.

I. THE CLAIM AND ITS ORIGINS

In the 1997 film Good Will Hunting, a young janitor at the Massachusetts Institute of Technology solves a graduate-level mathematics problem left on a hallway blackboard — a problem none of the institute's doctoral students can crack. Will Hunting is a genius. He is also invisible to every institutional metric. No IQ test discovered him. No admissions process identified him. No psychometric instrument measured what was there. He was found by accident — a professor who happened to leave a problem in a public space, and a janitor who happened to solve it while mopping the floor.

The film's emotional centre is the scene where Will's therapist, Sean Maguire, repeats four words until the young man's defences break: "It's not your fault." Will's damage is not cognitive — his mind is extraordinary. His damage is institutional — the foster system, the abuse, the poverty that surrounded a mind of exceptional capacity with conditions that suppressed its expression and scarred its possessor. Sean does not say "you're smart enough to overcome this." He says "it's not your fault" — because the fault is in the system that failed the mind, not in the mind that the system failed.

I open this section with Will Hunting because his story is the story of every unmeasured mind on the African continent. The Will Huntings of sub-Saharan Africa are not hypothetical. They exist by the millions — minds of extraordinary capacity operating in contexts where no professor leaves equations on hallway blackboards, where no institution exists to detect what is there, where the infrastructure for identifying and developing cognitive potential is absent not because the potential is absent but because the infrastructure was never built, or was destroyed, or was deliberately prevented from being built by the very actors who now measure the absence and call it genetic limitation.

And the numbers they cite — the numbers that launched a thousand hereditarian arguments — are methodologically broken before any interpretation begins.

Richard Lynn's IQ and the Wealth of Nations (2002) and IQ and Global Inequality (2006) assigned national IQ estimates to virtually every country on earth. For sub-Saharan Africa, the numbers he reported — typically 65–75 — have become the most cited figures in the discourse of racial cognitive hierarchy. They are treated in hereditarian circles as settled data points, as firm as the boiling point of water or the speed of light. They are nothing of the sort.

Lynn's African IQ estimates derive, in many cases, from single studies with tiny, non-representative samples — sometimes fewer than 100 people, often drawn from a single school or village, occasionally from samples of specifically disabled children presented as population norms. He then treated these results as representative of entire nations of tens of millions. For 104 of 185 countries, no studies were available at all; Lynn simply imputed scores from neighbouring countries, a method that bakes in the very assumptions it claims to test. If you assume neighbouring populations are cognitively similar and then assign them similar scores, you have demonstrated nothing except your prior belief.
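The circularity of neighbour-based imputation can be made concrete. The sketch below is a minimal illustration. It assumes that imputation means filling an unmeasured country with the plain average of its measured neighbours; Lynn's actual procedure may have differed in detail. The country labels and scores are hypothetical.

```python
from statistics import mean


def impute_from_neighbours(measured, neighbours):
    """Fill each unmeasured country with the mean of its measured neighbours.

    `measured` maps country -> observed score; `neighbours` maps an
    unmeasured country -> list of adjacent countries. Countries with no
    measured neighbours are left out of the result.
    """
    filled = dict(measured)
    for country, adjacent in neighbours.items():
        if country in filled:
            continue
        known = [measured[n] for n in adjacent if n in measured]
        if known:
            filled[country] = mean(known)
    return filled


# Hypothetical countries: A and B were tested, C was not.
neighbours = {"C": ["A", "B"]}
baseline = impute_from_neighbours({"A": 70, "B": 80}, neighbours)
shifted = impute_from_neighbours({"A": 90, "B": 80}, neighbours)

# C simply inherits whatever its neighbours were assigned.
print(baseline["C"], shifted["C"])
print(f"share of dataset imputed: {104 / 185:.1%}")
```

Moving A's score by 20 points moves C's imputed score by 10 points, and C contributes no information of its own. In a dataset where 104 of 185 entries are produced this way, more than half of the "data" is a restatement of the rest.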

But the methodological failure runs deeper than sample sizes and imputation. Even a perfectly randomised national sample — even one that met every standard of survey methodology — would be a category error when applied to a country like Zambia. I know this because I have spent my professional life working across contexts in Zambia that a single IQ mean would average into meaninglessness.

Zambia contains over seventy ethnic groups, whose languages fall into several distinct Bantu language clusters. It contains Lusaka, a city of three million with universities, hospitals, and professional service firms. It also contains villages in Western Province where subsistence farming is the primary economic activity and the nearest school may be hours away on foot. It contains mining communities in the Copperbelt with industrial wage labour and exposure to formal education systems, and pastoral communities in Southern Province with entirely different cognitive ecologies. It contains households in the top income decile with access to nutrition, healthcare, and educational resources comparable to middle-income countries, and households in the bottom decile experiencing malnutrition, parasitic disease burden, and zero access to formal education.

A single mean IQ score for "Zambia" averages across all of this and produces a number that describes no actual Zambian. It is the statistical equivalent of averaging the temperature of a hospital: the number includes the morgue and the fever ward, and the result describes neither. The mean is not merely imprecise. It is structurally uninformative — because the variance it averages over is where all the actual information lives.
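The hospital analogy is an instance of the law of total variance. Total variance equals the average variance within strata plus the variance of the stratum means around the pooled mean. The minimal sketch below uses two hypothetical strata of equal size to show how much a single mean discards. The numbers are illustrative only, not Zambian data.

```python
import math
from dataclasses import dataclass


@dataclass
class Stratum:
    weight: float  # share of the total population in this stratum
    mean: float    # stratum mean score
    sd: float      # stratum standard deviation


def decompose(strata):
    """Law of total variance: total = mean within-stratum variance + variance of stratum means."""
    if not math.isclose(sum(s.weight for s in strata), 1.0):
        raise ValueError("stratum weights must sum to 1")
    pooled_mean = sum(s.weight * s.mean for s in strata)
    within = sum(s.weight * s.sd ** 2 for s in strata)
    between = sum(s.weight * (s.mean - pooled_mean) ** 2 for s in strata)
    return pooled_mean, within, between, within + between


# Two hypothetical strata of equal size (illustrative numbers only).
strata = [Stratum(weight=0.5, mean=90, sd=10), Stratum(weight=0.5, mean=110, sd=10)]
pooled, within, between, total = decompose(strata)
print(f"pooled mean {pooled}, within {within}, between {between}, total {total}")
print(f"share of variance between strata: {between / total:.0%}")
```

The pooled mean of 100 describes neither stratum. Half of the total variance sits between strata, and that is exactly the information a single national figure throws away.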

What a serious study of Zambian cognitive performance would require is a stratified design that treats the country as what it actually is: a collection of radically different cognitive ecologies with different environmental inputs, different educational systems, different disease burdens, different nutritional profiles, and different cultural-cognitive modes. The study would need to sample across income deciles within each region, across regions with different ecological and economic characteristics, across cultural groups with different cognitive traditions, and across urban-rural gradients. Each stratum would produce its own distribution — its own mean, its own variance, its own shape. The relationships between strata would then require econometric tools — difference-in-difference analysis to isolate the effect of specific environmental variables, regression discontinuity designs to exploit natural experiments in educational access, instrumental variable approaches to address the endogeneity between environment and performance.
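Of the tools listed, difference-in-differences is the simplest to illustrate. The sketch below computes the classic two-group, two-period estimate from hypothetical scores. It compares a district that gained school access with a comparable district that did not. It assumes parallel trends, an assumption a real study would have to defend.

```python
from statistics import mean


def group_mean(records, group, period):
    """Mean score for one group in one period."""
    return mean(score for g, p, score in records if g == group and p == period)


def diff_in_diff(records):
    """2x2 difference-in-differences: (treated change) - (control change).

    Only interpretable as a causal effect under the parallel-trends assumption.
    """
    treated_change = group_mean(records, "treated", "post") - group_mean(records, "treated", "pre")
    control_change = group_mean(records, "control", "post") - group_mean(records, "control", "pre")
    return treated_change - control_change


# Hypothetical test scores: (district, period, score).
records = [
    ("treated", "pre", 38), ("treated", "pre", 42),
    ("treated", "post", 53), ("treated", "post", 57),
    ("control", "pre", 40), ("control", "pre", 44),
    ("control", "post", 45), ("control", "post", 49),
]
estimate = diff_in_diff(records)
print(f"estimated effect of school access: {estimate} points")
```

The control district's 5-point change absorbs whatever affected both districts over the period, a national curriculum change for example. Only the remaining 10 points are attributed to school access.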

This is what serious social science looks like. Lynn did none of it. He took a single sample — often from a single location, often from a convenience sample of schoolchildren in one city — and assigned the result to seventeen million people. The number then entered the global dataset as "Zambia's IQ" and was used to make claims about the genetic cognitive capacity of an entire nation.

The design of the study reveals the intent of the researcher. A study designed to understand variation is stratified and contextualised. A study designed to rank nations takes one number per country and ignores all internal variation. Lynn designed the second kind.

Wicherts, Dolan, and van der Maas (2010) conducted the systematic review that Lynn should have conducted himself. They applied standard inclusion criteria, the kind any undergraduate methods course would require. They found that Lynn had cherry-picked studies, excluding higher-scoring African samples and including dubiously low ones. When proper criteria were applied, the corrected mean for sub-Saharan Africa rose to approximately 82. That is still below the Western mean, but it tells a different story entirely. It is far more consistent with environmental explanations, particularly in light of the Flynn Effect: some countries have documented gains of 15–20 points within a single generation as environmental conditions improved.

The irony is precise. Lynn's methodology undermines his own claim to rigorous cognition. He imputed scores for 104 countries, used samples of disabled children as population norms, and cherry-picked studies to confirm his priors. The result is a body of work that would fail peer review in any empirical discipline not captured by the conclusions it serves. The ontological disorder (the commitment to racial hierarchy that motivated the research) degraded the cognitive capacity of the researcher in precisely the domain his research claimed to measure in others. He was measuring African intelligence with an instrument calibrated by his own distortion. The instrument revealed the distortion of its maker, not the capacity of its subjects. I suppose one ought to say to Lynn, "It's not your fault."

II. WHAT THE TESTS ACTUALLY MEASURE

The psychometric problems extend far beyond Lynn's methodology. Even if the data were collected rigorously — even if every country had large, representative, perfectly stratified samples — the scores would still be cross-culturally incomparable, because the tests do not measure the same thing in different populations.

This is not a theoretical concern. It is an empirical finding, documented across decades of cross-cultural psychometric research. Wicherts and colleagues, in their companion papers to the systematic review, demonstrated that Raven's Progressive Matrices — often called "culture-fair" because it uses abstract visual patterns rather than verbal content — has a g-loading of approximately 0.55 in African samples compared to 0.80 or above in Western samples. The test's factor structure fragments in non-Western populations — what is one dimension of general intelligence in Europe becomes multiple partially independent factors in Africa.
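Consider a single-factor model with standardised variables. There, the squared loading of a test on the general factor is the share of its score variance that the factor accounts for. Taking the loadings cited above at face value, the worked arithmetic is:

```latex
\lambda_{\text{African}}^{2} = 0.55^{2} \approx 0.30,
\qquad
\lambda_{\text{Western}}^{2} = 0.80^{2} = 0.64
```

On these figures, g would account for roughly 30% of Raven's score variance in the African samples, against at least 64% in the Western ones. The same score therefore carries much less information about g in one population than in the other.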

This means the tests are not measuring the same construct. A score of 82 in Zambia and a score of 100 in Britain are not two measurements of the same thing at different levels. They are measurements of different things — different cognitive constructs with different internal structures — that happen to produce numbers on the same scale. Comparing them is like comparing the weight of a stone and the temperature of a room because both produce numbers: the numbers exist, but the comparison is meaningless because the underlying constructs are unrelated.

Warne (2023) tested this more recently across Ghana, Kenya, Pakistan, Sudan, and US norms. Ghana and Kenya achieved strict measurement invariance with US norms — meaning the tests measured the same construct in these populations. Pakistan and Sudan did not. The findings are important not for which countries passed but for what the testing demonstrates: measurement invariance must be empirically established, not assumed, before scores can be compared. It has not been established for most of the comparisons the hereditarian makes. The cross-cultural IQ rankings that populate the hereditarian literature rest on an untested assumption that the tests measure the same thing everywhere — an assumption that the psychometric evidence shows is frequently false.

Nassim Nicholas Taleb has added a statistical dimension to this critique that the psychometric literature has largely ignored. His central point is mathematical: IQ is Gaussian by construction (the scores are normalised to a bell curve), but real-world performance — the outcomes IQ supposedly predicts — is fat-tailed (distributed according to power laws). Correlating a Gaussian variable with a power-law variable produces artefacts that look like predictive validity but are not, because the tails where the actual action happens are precisely where IQ's predictive power collapses. IQ predicts the absence of cognitive disability with reasonable accuracy. It does not predict the presence of cognitive excellence. It is, as Taleb puts it, a measure of "un-intelligence" rather than intelligence — useful for detecting when the substrate is severely impaired, useless for detecting what the consciousness operating through an unimpaired substrate can achieve.
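The phrase "Gaussian by construction" can be illustrated with a simplified rank-based normalisation. Raw scores are converted to percentile ranks and then mapped through the inverse normal CDF onto a scale with mean 100 and SD 15. This sketch rests on two stated assumptions: no tied scores, and midpoint percentiles. Real test norming uses large reference samples and published tables, and procedures differ between instruments.

```python
from statistics import NormalDist


def normalise_to_iq(raw_scores, mean=100.0, sd=15.0):
    """Map raw scores onto an IQ-style normal scale via percentile ranks.

    Assumes no tied raw scores. Uses midpoint percentiles (rank + 0.5) / n
    so that the lowest and highest scores do not map to -inf or +inf.
    """
    n = len(raw_scores)
    scale = NormalDist(mu=mean, sigma=sd)
    percentile = {score: (rank + 0.5) / n for rank, score in enumerate(sorted(raw_scores))}
    return [scale.inv_cdf(percentile[score]) for score in raw_scores]


# Hypothetical raw scores; their own distribution does not affect the output shape.
raw = [3, 9, 1, 7, 5]
iq = normalise_to_iq(raw)
for score, value in zip(raw, iq):
    print(f"raw {score} -> {value:.1f}")
```

The raw scores might be skewed, lumpy, or fat-tailed, but the output is bell-shaped by design. That is the sense in which IQ is Gaussian by construction. Nothing in this step makes the real-world outcomes that the scores are correlated with Gaussian as well.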

Beyond measurement invariance and statistical properties, there is the question of what the tests structurally cannot capture. Africa is the world's most multilingual continent. The average African manages three to five languages in daily life — code-switching between them in real time depending on social context, emotional register, and communicative purpose. Research in cognitive science consistently demonstrates that multilingualism requires constant executive function engagement: inhibitory control, attentional switching, and working memory management. A South African study found that multilinguals significantly outperformed monolinguals on working memory tasks. PNAS neuroimaging research showed that multilingual brains exhibit stronger prefrontal-occipital connectivity than monolingual brains.

Managing four or five languages simultaneously is not evidence of cognitive deficit. It is evidence of executive function demand that IQ tests do not capture — because IQ tests are administered in one language, under conditions designed for monolingual test-takers, measuring a cognitive mode that treats multilingual complexity as noise rather than signal. The African who navigates five languages before breakfast is performing a cognitive feat that the Cambridge professor who speaks only English never attempts — and the test records only the Cambridge professor's performance as intelligence.

There is also what I have called, in my Coordination Trap essay, the Ubuntu Trap — the interaction between communal cognitive ecology and individualist measurement instruments. IQ tests measure individual performance under conditions of competitive isolation: one person, one test, one score, timed, silent, alone. African cognitive ecologies are structured around collective problem-solving, consensus-building, and relational reasoning. The Ubuntu sensibility — umuntu ngumuntu ngabantu, "a person is a person through other persons" — is not a sentimental platitude. It is a cognitive operating system that distributes problem-solving across social networks rather than concentrating it in individual processors.

A test designed for individual processors, administered to minds trained in distributed processing, measures the mismatch between the test's assumptions and the test-taker's cognitive ecology. It does not measure intelligence. It measures the distance between two different ways of being intelligent — and then it calls that distance a deficit.

III. WHAT THE HISTORICAL RECORD SHOWS

Every population that has ever been colonised, impoverished, or institutionally excluded has scored low on IQ tests. Every population that has escaped those conditions has converged with global norms. The pattern admits no exceptions and spans the entire human phylogenetic tree.

In 1917, the United States Army administered the Alpha and Beta intelligence tests to 1.7 million soldiers, the first large-scale IQ testing programme in history. The resulting racial and ethnic hierarchy is instructive not for what it found but for what happened next. Italian immigrants scored at mental ages of 10–11, equivalent to IQ scores in the 70s–80s. That is the same range Lynn later attributed to sub-Saharan Africans. Polish, Russian, and other Southern and Eastern European immigrants scored comparably. Carl Brigham, the Princeton psychologist who analysed the results, published A Study of American Intelligence (1923). He argued that the data proved the intellectual inferiority of these groups and recommended immigration restriction to protect the American gene pool. Congress obliged: the Immigration Act of 1924 (the Johnson-Reed Act) was designed explicitly to reduce immigration from Southern and Eastern European countries whose populations had scored poorly on the Army tests.

Brigham later recanted his racial claims as "without foundation." He had good reason. Within two generations, the descendants of those "intellectually inferior" Italian, Polish, and Russian immigrants converged fully with Northern European American IQ norms. Their grandchildren scored 100. The convergence was total. No one attributes it to genetic change — because the genetic composition of these populations did not change in two generations. What changed was the environment: English language fluency, educational access, nutritional adequacy, and institutional integration.

Brigham designed the SAT from the Army Alpha test. The instrument that was used to justify racial immigration restriction became, with modifications, the instrument that American universities use to this day to select students. The lineage is direct. The assumptions are inherited.

China presents the most devastating case for the hereditarian position. Chinese IQ was estimated in the mid-80s in the 1950s — comparable to Lynn's estimates for sub-Saharan Africa. Today, Chinese IQ is measured at 105 or above. The gain — approximately 20 points — occurred over roughly 50 years. It tracked industrialisation, universal education, improved nutrition, and public health. It did not track any identifiable genetic change, because no genetic change of the required magnitude is possible in 50 years. The Chinese population in 1955 and the Chinese population in 2005 are genetically essentially identical. Their IQ scores differ by 20 points. The explanation is entirely environmental.

The hereditarian who claims that the 30-point gap between sub-Saharan Africa and Western Europe reflects genetic differences must explain why the 20-point gap between 1955 China and 2005 China does not. A comparable magnitude of difference. The same direction. The same kind of population-level measurement. One gap is attributed to genetics; the other is universally acknowledged as environmental. The double standard is not scientific. It is political.

The Black-White IQ gap in the United States has narrowed from approximately 15 points in the 1970s to approximately 10 points in the 2000s. Dickens and Flynn's analysis concluded that "the constancy of the Black-White IQ gap is a myth." The narrowing tracked desegregation, educational investment, nutritional improvement, and reduction in lead exposure. It stalled in the late 1980s — coinciding precisely with the stalling of environmental convergence: the end of active desegregation efforts, the beginning of mass incarceration, the crack epidemic, the widening wealth gap, and the resegregation of American schools. The environmental improvement that drove the convergence stopped. The IQ convergence stopped at the same time. The hereditarian's "genetic floor" is indistinguishable from an environmental plateau — and the environmental plateau has a documented cause.

Aboriginal Australians — assigned an IQ of 62 by Lynn — demonstrate superior spatial memory to European Australians in tasks measuring the spatial cognition their culture developed. A study at Monash University found that Aboriginal memorisation techniques outperformed the Greek memory palace method when taught to medical students. The population that scored lowest on Lynn's scale outperformed Europeans in the cognitive domain their culture had selected for. The test measured the wrong dimension of a multi-dimensional mind and declared the mind deficient.

The pattern is universal. Every colonised population scores low: Aboriginal Australians, Native Americans, Latin American indigenous populations. These populations span the entire human phylogenetic tree — they are as genetically distant from each other as any human groups can be. The only thing they share is the historical experience of colonisation, institutional destruction, and environmental deprivation. The hereditarian must argue that the colonisers coincidentally colonised all the genetically less intelligent populations on earth — spanning every branch of the human family — and that this coincidence produced the same pattern of low scores in genetically unrelated peoples living on different continents in different ecological contexts. The alternative explanation is simpler: colonisation produces the conditions that depress IQ scores, and the scores measure the conditions, not the genetics.

IV. WHAT AFRICANS ACTUALLY ACHIEVE WHEN CONDITIONS CONVERGE

The IQ debate exists in a bubble that never encounters the performance data — the evidence of what African minds actually produce when the friction is reduced.

The IGCSE (the International General Certificate of Secondary Education) is administered by Cambridge Assessment across 10,000 schools in 160 countries. In it, a Kenyan student ranked number one worldwide in mathematics. Not number one in Africa. Number one on earth. That result is very hard to reconcile with a Kenyan population mean IQ of 70. Similarly, a cousin of mine who attended my alma mater, a high school in Zambia, was top in the world for IGCSE Design and Technology in 2015/2016. What is more, this is not anomalous for that school: it has happened at least nine times in recent history. Students from every high-performing country sit this competitive examination. The right tail of a distribution with mean 70 does not produce global top-rank performance in it nine times from a single school, with similar results across other schools in the region. The data refutes the mean.

In the International Baccalaureate Diploma Programme, Africa is grouped with Europe and the Middle East in the IBAEM region. Timezone 2, which includes Africa, has grade boundaries equal to or higher than those of the Americas. Enko Education, the largest African IB network, produces graduates admitted to Yale, Sciences Po, and the University of Toronto. The IB proves what the IQ debate denies: when inputs are equal, outputs converge.

Nigerian-Americans hold bachelor's degrees at a rate of 64.4%, compared to 36.2% for the total US population. They hold graduate degrees at 29%, compared to 11% nationally. Second-generation Nigerian-Americans — born and raised in the United States, not selected through immigration — achieve college graduation rates of 73.5%, compared to 32.9% for white Americans. They hold PhD and professional degrees at 14%, compared to 7.3% for Asian-American men. At Harvard Business School, Nigerian-Americans account for approximately 25% of Black students despite constituting less than 1% of the Black population.

If the Nigerian population mean IQ is 70, the right tail required to produce these numbers is statistically impossible. A mean of 70 with standard deviation of 15 produces fewer than 3% of the population above IQ 100. The Nigerian-American data requires a right tail that is orders of magnitude larger than a mean of 70 permits. Either the mean is wrong or the test is measuring something other than the capacity these individuals are demonstrating. Both are true.
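The tail arithmetic in this paragraph can be checked directly. The sketch assumes normally distributed scores with SD 15, the convention used throughout this essay. It compares a mean of 70 with the corrected mean of about 82 from Wicherts, Dolan, and van der Maas (2010), cited in section I.

```python
from statistics import NormalDist


def share_above(threshold, mean, sd=15.0):
    """Fraction of a normal distribution lying above `threshold`."""
    return 1.0 - NormalDist(mu=mean, sigma=sd).cdf(threshold)


above_100_at_70 = share_above(100, 70)  # z = +2.0
above_100_at_82 = share_above(100, 82)  # z = +1.2
above_130_at_70 = share_above(130, 70)  # z = +4.0
above_130_at_82 = share_above(130, 82)  # z = +3.2

print(f"above 100: {above_100_at_70:.2%} at mean 70, {above_100_at_82:.2%} at mean 82")
print(f"above 130: {above_130_at_70:.4%} at mean 70, {above_130_at_82:.4%} at mean 82")
print(f"ratio above 130: {above_130_at_82 / above_130_at_70:.1f}x")
```

Under these assumptions, about 2.3% of a mean-70 population scores above 100, against about 11.5% at a mean of 82. The share above 130 rises roughly twentyfold. Right-tail counts are extremely sensitive to the assumed mean, so observed right-tail performance is evidence about whether the mean is right.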

I've spent my professional life working across contexts that span the full range of human cognitive demand. I've worked with illiterate rural villagers who excel at managing complex community logistics at scale. I've worked with African entrepreneurs who navigate market conditions of extraordinary complexity; some are highly educated, and some have no formal training. I've worked with investment bankers in global financial hubs. I've worked with technologists in Silicon Valley, family offices in the Middle East, African urban planners, architects, lawyers, bankers, and engineers. I operate across all of these contexts, not sequentially but simultaneously. I code-switch between cognitive ecologies the way an African multilingual code-switches between languages. The same is true of Western entrepreneurs who work in Africa and build from the ground up. Fluency of context is not unusual; it is inevitable when one has to work within and across civilisational situations. My point is that this is entirely human. It is problem-solving, which is what happens when we apply cognition to circumstances.

The cognitive architecture required to do this is more demanding than operating within any single context. It means moving between rural Zambian relational reasoning and formal London financial analysis, holding both simultaneously, and translating between them. Yet it is not special. Given the circumstances, anyone can do it. Children do it when they visit relatives in a different city with a different urban culture, and interact with families and children who differ from them in ways big and small. The investment banker who has never left London operates in one cognitive mode. The rural chief who has never left Western Province operates in one cognitive mode. Operating across both requires a cognitive flexibility that single-context performance metrics cannot capture and that IQ tests, by design, do not measure. Reality demands that flexibility of anyone who operates across societies. This is true not only across continents but within them. A mid-sized farmer working in a rural African context will inevitably employ farming staff who may be illiterate and formally "unskilled" but are appropriately trained for agricultural work, most likely by the farmer. That farmer will have to engage with bankers, insurers, input vendors, and the buyers who purchase the produce. The farmer will work with the rural community to resolve local conflicts, and with lawyers as a business function. The farmer will travel internationally from time to time, and to the capital city from time to time. The delta in lived experience across these circumstances requires a fluency that is simply a fact of life for such a farmer. It would be entirely confusing for someone used to a highly systematised society, where state capacity keeps the variance of experience tightly bounded.

It is these contexts without institutional scaffolding that require more cognitive bandwidth, not less. The London banker operates within a structured institutional environment: legal frameworks, regulatory systems, standardised financial instruments, reliable infrastructure, contractual enforcement mechanisms. The institutions do much of the cognitive work. Every institutional function the London banker takes for granted is something an African entrepreneur must either build or work around. This requires more cognitive capacity, not less. Want to be the biggest cattle rancher in a particular country? You may have to learn how to build and operate an abattoir too. You may then have to build your own network of butcheries and the cold chain that supply chain depends on. This is not a convenient hypothetical; it is actual business history.

The IQ test cannot see this. It measures the one cognitive function that institutional scaffolding supports — abstract pattern recognition in controlled conditions. It cannot measure the cognitive capacity required to operate without scaffolding — which is the cognitive reality of most Africans and the cognitive achievement that the hereditarian framework structurally cannot recognise.

I once sat in an unconference in Silicon Valley where Neoreactionaries were discussing the potential for embryo selection to "fix Africa." Their framework said African minds are biologically deficient. Because it operates at the population level, the framework is unfalsifiable by individual counterexample: "we're not talking about you, we're talking about the mean." But the mean describes no actual African. And the framework's proposed solution, embryo selection, is the Atlantic Slave Trade's ontological innovation translated into CRISPR-era biotechnology. The African's legal standing has changed to one of equality, and that is to be lauded. But the view of the African, and the posture toward the African, remain the same. And they rest on faulty science.

V. THE HUMAN GENOME

The hereditarian position requires that human populations are genetically different enough to have evolved different cognitive capacities. The genome says otherwise.

Three independent lines of genomic evidence converge on the same conclusion: the genetic raw material for differential cognitive evolution between human populations does not exist.

The first line: Lewontin's apportionment. Richard Lewontin's 1972 analysis, whose pattern has been replicated consistently for over fifty years, established that 85.4% of total human genetic diversity is found within populations, 8.3% among populations within "races," and only 6.3% between "races." The fixation index (FST) between human continental populations ranges from 0.05 to 0.15. On Wright's descriptive scale, that range is "moderate" differentiation; he reserved "great" for FST of 0.15–0.25 and "very great" for values above 0.25. Human between-population FST therefore falls below the threshold Wright considered even "great," let alone the threshold for subspecies classification, which in zoological taxonomy typically requires FST above 0.25.
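To make the FST figures concrete, here is a minimal Python sketch for a single biallelic locus. It assumes Nei's heterozygosity-based estimator with equally weighted subpopulations, which is only one of several FST estimators (Lewontin himself used a Shannon-information measure, so the comparison to his percentages is by analogy), and the allele frequencies in the usage lines are invented for illustration.

```python
"""Sketch: Nei's G_ST (one F_ST estimator) for a biallelic locus, plus Wright's verbal bands."""
from statistics import fmean


def fst_biallelic(freqs: list[float]) -> float:
    """Return F_ST = (H_T - H_S) / H_T for equally weighted subpopulations.

    freqs: frequency of one allele in each subpopulation (values in [0, 1]).
    H_S is the mean expected heterozygosity within subpopulations;
    H_T is the expected heterozygosity of the pooled population.
    """
    h_s = fmean(2 * p * (1 - p) for p in freqs)
    p_bar = fmean(freqs)
    h_t = 2 * p_bar * (1 - p_bar)
    if h_t == 0:
        return 0.0  # monomorphic locus: no variation to apportion
    return (h_t - h_s) / h_t


def wright_label(fst: float) -> str:
    """Map an F_ST value onto Wright's descriptive bands."""
    if fst < 0.05:
        return "little"
    if fst < 0.15:
        return "moderate"
    if fst < 0.25:
        return "great"
    return "very great"


# Hypothetical allele frequencies in two subpopulations.
print(round(fst_biallelic([0.4, 0.6]), 3), wright_label(fst_biallelic([0.4, 0.6])))
print(round(fst_biallelic([0.2, 0.8]), 3), wright_label(fst_biallelic([0.2, 0.8])))
```

Frequencies of 0.4 and 0.6 give FST = 0.04 ("little"); frequencies of 0.2 and 0.8 give 0.36 ("very great"). Lewontin's two between-population components together come to 14.6% of diversity, which, read on Wright's bands, would fall in the "moderate" range.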

The second line: universal interfertility. When a European and an African have a child, that child is fully fertile, fully healthy, fully viable. There is no outbreeding depression, no hybrid sterility, no reduced fitness — often the opposite, with hybrid vigour increasing fitness. Mixed-race children show no cognitive penalty for combining genomes from populations the hereditarian claims differ in cognitive capacity. If genuine genetic cognitive differences existed between populations, combining those genomes should produce detectable effects — intermediate performance, disrupted development, or some measurable signature of incompatibility in brain-related regions. None of this occurs. The body knows what the IQ debate denies.

The third line: the chimpanzee comparison. Chimpanzee populations separated by a single river in Cameroon are more genetically different from each other than a Norwegian is from a Japanese person, or a Yoruba from an Aboriginal Australian. Chimpanzee nucleotide diversity is approximately four times human diversity. Chimpanzee FST between subspecies reaches 0.29 — above Wright's threshold for "very great" variation — while human FST between continental populations is 0.05–0.15. If a primatologist applied the same taxonomic standards to humans that they apply to chimpanzees, all humans would be classified as a single subspecies with minor geographic variation. The most genetically distant humans on earth are more closely related than chimpanzees that can hear each other's calls across a river. The genetic raw material the hereditarian needs — substantial allele frequency divergence accumulated over long reproductive isolation — does not exist in humans at anything approaching the level found in our closest relative.

Beyond these three demolitions, the genomic details are equally unfavourable for the hereditarian position.

Africa has more genetic diversity than the rest of the world combined. The Out-of-Africa bottleneck — the event roughly 60,000–70,000 years ago when a small group of modern humans migrated out of Africa and founded all non-African populations — permanently reduced the genetic diversity of all non-African populations relative to African populations. Any genetic variants that contribute to cognitive capacity are more likely to exist in African populations than anywhere else, because African populations contain more of everything. The hereditarian must argue that the Out-of-Africa migrants selectively carried intelligence-enhancing variants with them and left intelligence-reducing variants behind. This is empirically unsupported and theoretically implausible.
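Why a bottleneck leaves the founders with a subset of their source population's variation can be sketched with one line of probability. The Python below assumes the founders are drawn at random from a large source population and ignores drift during and after the bottleneck; the founding size of 1,000 people is a hypothetical round number, not an estimate from the literature.

```python
"""Sketch: chance that a variant survives a founder bottleneck."""


def retention_probability(freq: float, founders: int) -> float:
    """P(at least one copy of an allele is carried by the founders).

    Assumes the founders' 2 * founders chromosomes are drawn at random
    from a large source population in which the allele has frequency freq.
    """
    chromosomes = 2 * founders
    return 1 - (1 - freq) ** chromosomes


# Hypothetical founding group of 1,000 people (2,000 chromosomes).
for freq in (0.2, 0.01, 0.001, 0.0001):
    print(f"freq {freq:>7}: retained with p = {retention_probability(freq, 1000):.3f}")
```

Common alleles essentially always make it through; an allele at 0.1% frequency is lost about 14% of the time, and one at 0.01% about 82% of the time. That asymmetry is the mechanism by which a bottlenecked population ends up carrying a subset of its source population's variation.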

Intelligence is massively polygenic. The largest GWAS of cognition-related traits, notably educational attainment, have identified over 3,800 genome-wide significant loci, each contributing less than 0.02% of variance. There is no "intelligence gene." The best polygenic scores, aggregating thousands of variants from samples of hundreds of thousands, explain approximately 6% of variance in measured intelligence within European populations. They also transfer poorly across ancestries: their predictive power drops dramatically when applied to non-European populations, because the linkage disequilibrium patterns, population structure, and gene-environment correlations that the scores capture are all population-specific.
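The linkage-disequilibrium part of that argument can be illustrated with a toy simulation. This Python sketch is not a model of real intelligence GWAS: it assumes a single causal variant explaining 5% of variance, a score that only sees a nearby tag SNP, and invented LD strengths of 0.9 and 0.4 in two populations. Because the tag's correlation with the causal variant equals the LD parameter in this setup, the variance the score captures should scale roughly with its square.

```python
"""Sketch: a tag-SNP score loses accuracy when LD differs between populations.

Toy model (all parameters hypothetical): one causal variant explains h2 of
phenotypic variance; the score uses a nearby tag SNP instead. On each
chromosome the tag copies the causal allele with probability `ld`,
otherwise it is drawn independently, so the causal-tag correlation is `ld`.
"""
import numpy as np


def tag_score_r2(ld: float, n: int = 200_000, freq: float = 0.3,
                 h2: float = 0.05, seed: int = 0) -> float:
    rng = np.random.default_rng(seed)

    def chromosomes():
        causal = rng.random(n) < freq
        copy = rng.random(n) < ld
        tag = np.where(copy, causal, rng.random(n) < freq)
        return causal.astype(float), tag.astype(float)

    c1, t1 = chromosomes()
    c2, t2 = chromosomes()
    causal_dose, tag_dose = c1 + c2, t1 + t2  # genotypes coded 0/1/2

    # Scale the causal effect so it explains h2 of phenotypic variance.
    g = (causal_dose - causal_dose.mean()) / causal_dose.std()
    y = np.sqrt(h2) * g + np.sqrt(1 - h2) * rng.standard_normal(n)

    # Variance explained by the tag-based score = squared correlation.
    return float(np.corrcoef(tag_dose, y)[0, 1] ** 2)


r2_a = tag_score_r2(ld=0.9)  # population where the score was developed
r2_b = tag_score_r2(ld=0.4)  # population with weaker LD at the same locus
print(f"R^2 in population A: {r2_a:.4f}, in population B: {r2_b:.4f}")
```

With these settings the score should capture roughly 0.81 × 5% ≈ 4% of variance in population A and roughly 0.16 × 5% ≈ 0.8% in population B, even though the causal biology is identical in both.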

Within-family studies — comparing siblings who share the same parents, household, and population structure — show that polygenic score predictive power drops 40–50% relative to between-family analyses. The between-family signal that was attributed to "genetic effects" is substantially confounded by population structure and gene-environment correlation. If the polygenic scores are significantly confounded within a single European population, they are confounded far more severely between populations with greater structural and environmental differences. The between-population polygenic score differences that hereditarians cite are uninterpretable as evidence of genetic cognitive differences.
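The logic of the within-family design can be shown with a small simulation. In this Python sketch, all effect sizes are hypothetical: a score has a direct effect of 0.5 on the phenotype, and a family-level environment that tracks the parents' genotype adds 0.8 times the parental mean score. Siblings share that family term, so regressing sibling differences on score differences removes it.

```python
"""Sketch: a sibling-difference design strips out family-level confounding.

Toy model, all parameters hypothetical: a polygenic score has a direct effect
`direct` on the phenotype, and the family environment (e.g. resources that
track parental genotype) adds `family` times the parents' mean score.
"""
import numpy as np

rng = np.random.default_rng(1)
n_families = 100_000
direct, family = 0.5, 0.8

# Parents' mean score; each sibling's score = parental mean + segregation noise.
parental = rng.normal(0.0, np.sqrt(0.5), n_families)
sib1 = parental + rng.normal(0.0, np.sqrt(0.5), n_families)
sib2 = parental + rng.normal(0.0, np.sqrt(0.5), n_families)


def phenotype(score):
    return direct * score + family * parental + rng.normal(0.0, 1.0, n_families)


y1, y2 = phenotype(sib1), phenotype(sib2)

# Between-family (population) estimate: regress phenotype on score across everyone.
pop_slope = np.polyfit(np.concatenate([sib1, sib2]), np.concatenate([y1, y2]), 1)[0]
# Within-family estimate: regress sibling differences; the shared parental term cancels.
within_slope = np.polyfit(sib1 - sib2, y1 - y2, 1)[0]
print(f"population slope {pop_slope:.2f}, within-family slope {within_slope:.2f}")
```

Under this model the population slope should come out near 0.5 + 0.8 × 0.5 = 0.9 and the sibling-difference slope near 0.5, a drop of about 44%. The numbers were chosen for illustration, not fitted to any real cohort.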

The heritability trap remains the most persistent logical error in the debate. Within-population heritability of intelligence, estimated at 50–80% in Western adult populations, says nothing about between-population differences. The classic illustration, due to Lewontin, is precise: take a sack of genetically varied seeds, divide it at random, and plant one half in rich soil and the other in poor soil, with conditions kept uniform within each plot. Within each plot, height variation is 100% heritable, because every plant shares the same environment. But the difference between the plots is 100% environmental. High within-group heritability is perfectly compatible with 100% environmental between-group differences.
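The seed analogy can be run directly. This Python sketch simplifies Lewontin's random split by planting each of 500 hypothetical seed lines once in each plot, so the plots' genetic make-up is identical by construction; the heights and the 12 cm soil effect are invented.

```python
"""Sketch of the seed analogy: high within-group heritability, purely environmental gap.

Simplifying assumption: each of 500 genetically distinct seed lines is
planted once in each plot, and growing conditions are uniform inside a plot.
All numbers are hypothetical.
"""
import numpy as np

rng = np.random.default_rng(42)
genetic_value = rng.normal(30.0, 3.0, 500)   # cm of height each line "carries"
soil_effect = {"rich": 0.0, "poor": -12.0}   # the only environmental difference

heights = {plot: genetic_value + effect for plot, effect in soil_effect.items()}

for plot, h in heights.items():
    # Within a plot every seed gets the same environment, so all variance is genetic.
    h2 = np.var(genetic_value) / np.var(h)
    print(f"{plot:>4} plot: mean {h.mean():.1f} cm, within-plot heritability {h2:.2f}")

gap = heights["rich"].mean() - heights["poor"].mean()
print(f"between-plot gap: {gap:.1f} cm, all of it from soil")
```

Within each plot the heritability ratio prints as 1.00, because the only source of variation there is genetic, while the 12 cm gap between the plots comes entirely from the soil term. A random split would give the same result in expectation, plus a little sampling noise in the plot means.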

Genome-wide scans for signatures of natural selection have identified population-specific adaptations for skin colour, diet, immunity, and altitude tolerance. They have not identified selection signals for cognitive traits. If different populations had experienced substantially different selective pressures on cognitive ability, the signals should be detectable — because the selection would have acted on many loci simultaneously given intelligence's massive polygenicity. The absence of detected cognitive selection signals, from laboratories with the most data and the most sophisticated methods, is evidence that differential cognitive selection did not occur at the scale the hereditarian position requires.

The missing heritability problem remains unsolved. GWAS has identified only a fraction of the genetic variants that twin studies suggest should exist. If we cannot identify the specific variants that account for most of the heritability of intelligence within a single population, we are in no position to make claims about whether those variants differ systematically between populations. The hereditarian is building a causal argument on a foundation that molecular genetics has not yet laid.

And epigenetics, the regulation of gene expression, including in response to environmental conditions, offers a mechanism by which identical genotypes can produce different cognitive outcomes under different conditions. Maternal nutrition, stress, toxin exposure, and disease can alter epigenetic marks in ways that affect neural development across the lifespan. A population with identical genetic potential to any other could show depressed cognitive performance for generations if environmental conditions suppressed gene expression in the relevant pathways. The epigenome is the interface between genes and environment, and it is exactly where four centuries of institutional destruction would leave its mark: in the expression of genes, not in the genes themselves.

VI. THE NEUROSCIENCE

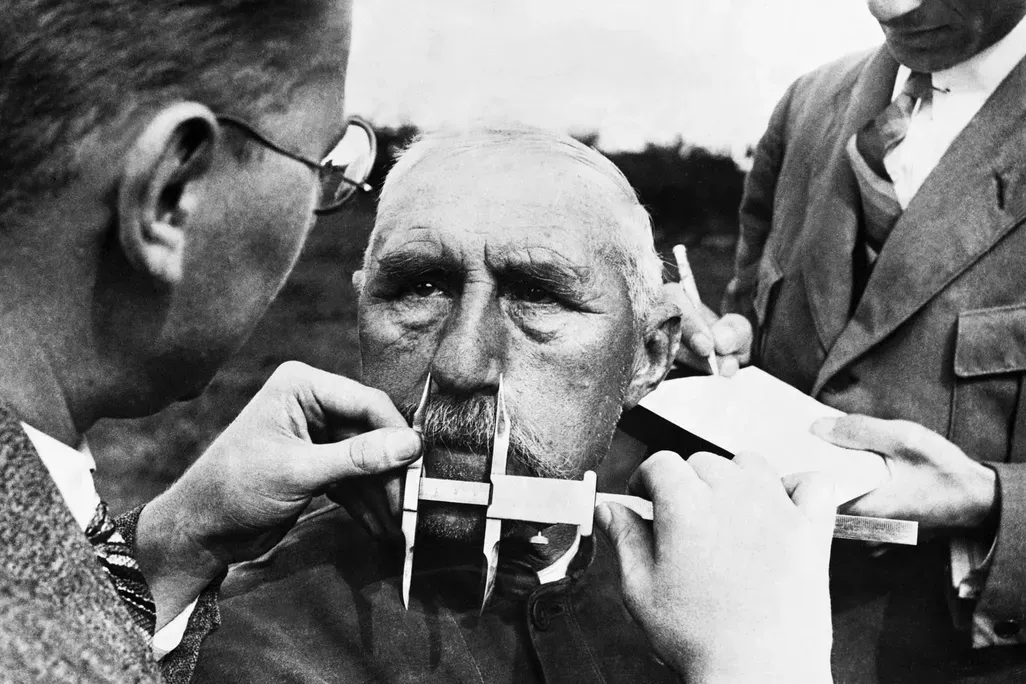

The hereditarian's strongest-seeming physical argument is brain size. Rushton's data reports average cranial capacities of East Asians at 1,364 cm³, Europeans at 1,347 cm³, and Africans at 1,267 cm³. MRI studies find brain volume correlates with IQ at approximately r = 0.40 within populations. The hereditarian argument: population differences in brain size mediate population differences in IQ. More brain, more neurons, more intelligence.

The neuroscience demolishes the argument at every level.

Brain size and malnutrition. The brain is the most metabolically expensive organ in the body — consuming 20% of basal metabolic energy in adults and a higher proportion in developing children. When caloric and protein intake is insufficient, the brain is literally starved of the building materials it needs to grow to its full genetic potential. Pre-clinical models of early malnutrition show that protein-energy restriction results in smaller brains with reduced DNA content, fewer neurons, simpler dendritic architecture, and reduced neurotransmitter concentrations. Children stunted before age two show persistent cognitive deficits through adolescence. Intrauterine growth restriction alone — prenatal malnutrition — reduces neurodevelopmental scores by 0.5 standard deviations, or 7.5 IQ points. MRI scoping reviews confirm that most children with moderate to severe malnutrition show cerebral atrophy with ventricular dilatation — the brain physically shrinks. Brain volume is a downstream consequence of nutritional adequacy. The populations with the lowest IQ scores are the populations with the highest rates of malnutrition, parasitic disease, and prenatal stress — all of which measurably reduce brain volume through documented mechanisms. The brain size differences the hereditarian attributes to genetics are substantially produced by the same environmental factors that produce the IQ differences. Brain size is not the cause. It is another consequence of the same deprivation.

Neuron count does not correlate with IQ. A stereological study of 50 male brains — physically counting neurons in post-mortem tissue rather than estimating from MRI volume — found that IQ does not correlate with the number of brain cells in the human neocortex and was only weakly correlated with brain weight. Numbers of glial cells, grey matter volume, white matter volume, cortical thickness, and surface area also showed near-zero nonsignificant correlations with IQ. The entire premise of the brain-size argument — more volume means more neurons means more intelligence — collapses at the most fundamental level of measurement.

The sex difference refutes the causal model. Men have 15% more cortical neurons and 13% greater total neuronal density than women. Men and women have approximately equal IQ. If neuron count determined cognitive capacity, men should dramatically outscore women. They do not. The sex difference in neuron count — 13–15% — is comparable to or larger than the racial differences Rushton claimed. And it produces no IQ gap. Einstein's brain was smaller than the average Rushton reported for Africans. The argument refutes itself.

Neural efficiency, not neural quantity, correlates with intelligence. Research using neurite orientation dispersion and density imaging (NODDI) found that the more intelligent a person, the lower the density of dendrites in their cerebral cortex: intelligent brains possess lean yet efficient neuronal connections, achieving high mental performance at low neuronal activity. Separately, studies of neurons from individuals with higher IQ found larger, more complex dendritic trees with faster action potential kinetics, which track synaptic inputs with higher temporal precision. The two findings concern different measures at different scales, but they converge on the same point: what matters for intelligence is not how many neurons you have but how efficiently they are wired and how fast they fire. These are properties profoundly shaped by nutrition, stimulation, and developmental conditions, not fixed racial characteristics.

Cross-species comparison confirms this. The human brain has the largest number of cortical neurons — about 15 billion — despite being much smaller than the brains of whales and elephants, which have 10–12 billion or fewer cortical neurons. Whales have larger brains. They are not more intelligent. What distinguishes human intelligence is neuron packing density and axonal conduction velocity — a species-level adaptation shared by all human populations.

Hemispherectomy: the brain-size argument's terminal refutation. In a hemispherectomy, surgeons remove or completely disconnect an entire cerebral hemisphere — literally half the brain — to treat severe intractable epilepsy in children. The results: the average IQ after hemispherectomy is typically in the 70s, with many achieving normal IQ of 85 or higher. Most patients have minimal to no behavioural problems, satisfactory language skills, and good reading capability. Cognitive measures typically change little between surgery and follow-up. Adults who had the procedure as children score 86% accuracy on face and word recognition tests, compared to 96% for controls.

Rushton claimed that 97 cm³ of cranial volume difference (the gap between his East Asian and African averages, approximately 7% of total brain volume) explained the racial IQ gap. Hemispherectomy removes 50% of the brain. Not 7%. Fifty percent. And the children retain functional cognition. Many score higher than the population mean the hereditarian literature assigns to Africans with whole brains. If 7% of volume explained a 15-point IQ gap, then removing 50% should produce catastrophic collapse. It does not. Children with half a brain go to school, read books, and recognise faces. The volume-intelligence relationship is so radically non-linear that the hereditarian's linear model, more brain equals more intelligence, is not merely imprecise. It is wrong.

Near-death experience research: consciousness persists when the channel flatlines. Parnia's AWARE-II study — the largest prospective study of consciousness during cardiac arrest, conducted across 25 hospitals — found that some patients whose brains showed electrical flatline on EEG monitors during cardiac arrest later recalled lucid, structured experiences from the period of clinical death. Brain activity consistent with consciousness reemerged in approximately 40% of monitored patients, sometimes up to 60 minutes into CPR — long after conventional medicine says the brain should be irreversibly damaged. Survivors described detailed, verifiable perceptions of their resuscitation environment — equipment used, words spoken, actions taken — confirmed by medical records and staff. These experiences were found to be distinct from hallucinations, delusions, illusions, dreams, or CPR-induced consciousness.

Paradoxical lucidity: cognition through a devastated brain. Patients with advanced Alzheimer's disease — massive neuronal loss, amyloid plaques, neurofibrillary tangles, brain volume reduced by 30% or more — sometimes suddenly and inexplicably regain full cognitive function shortly before death. They recognise family members they haven't recognised in years. They speak coherently. They recall memories the disease supposedly destroyed. The NIH has funded Parnia's lab to study this phenomenon. The brain is in ruins. The mind shines through. The channel is devastated. The source is undimmed.

Brain-Computer Interfaces: confirmation that the brain is a medium. If the brain generated cognition, a BCI would need to create cognition in silicon. It does not. Motor BCIs read electrical signals that the mind produces as it operates through the neural substrate, and route those signals to an external device. The BCI taps the signal at the transduction point. It reads the mail; it does not write it. Sensory BCIs work the reverse direction — converting external information into electrical signals delivered to the neural substrate, which the mind then interprets. Both directions confirm the medium model: the brain is the interface between the mind and the physical world. The signal exists independently of the specific channel it travels through, because you can reroute it and the same cognitive content arrives at a different output device.

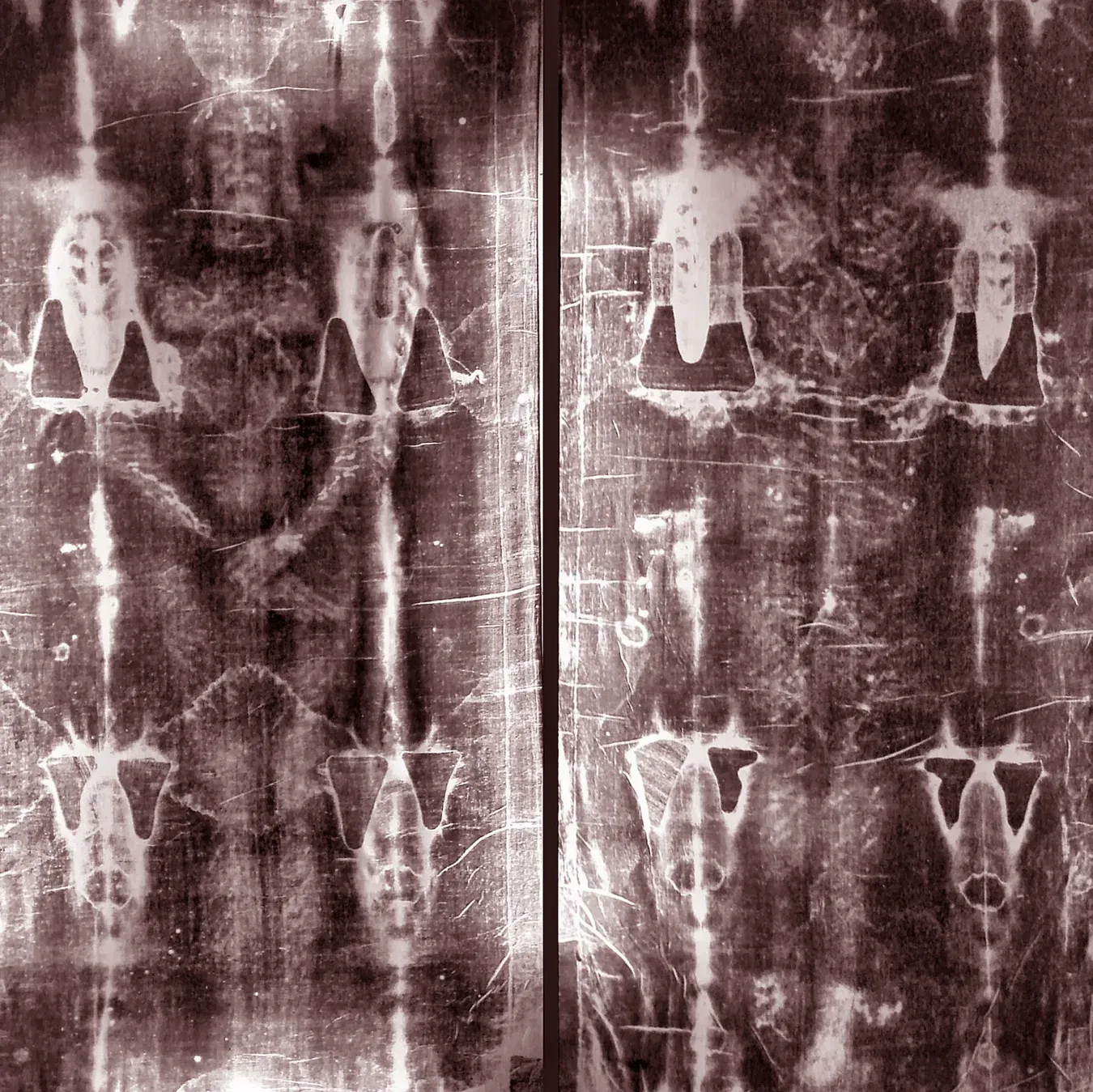

This is precisely why BCIs are a breach of the imago Dei. The incarnate design — the mind operating through biological substrate — is not accidental. It is architectural. Christ did not take a silicon body. He took a human body. The biological medium is the intended interface. A BCI that bypasses this interface treats the flesh as an obstacle rather than as the sacred channel through which the image is meant to operate. The resurrection model is not upload. It is transfiguration — consciousness perfecting its biological medium from within, not escaping it into something else. The Shroud, if genuine, bears the trace of this: the mind reasserting sovereignty over its substrate, not abandoning the substrate for a better one.

The energy cost of artificial intelligence: the miracle of sentience. The human brain operates on approximately 20 watts — less than a dim light bulb. On this budget it produces consciousness, self-awareness, moral reasoning, aesthetic experience, love, mathematical proof, musical composition, and the capacity to conceive of infinity while being finite. Training GPT-4 required an estimated 50 gigawatt-hours — roughly the annual electricity consumption of a small city. The result is a statistical approximation of one dimension of cognitive output — language — without consciousness, understanding, or meaning. The energy gap is too large to be explained by substrate efficiency differences alone. It suggests that what operates in biological cognition is not computation at scale but something qualitatively different — the mind's cognitive act, the finite image of the infinite Thinker, performing at creaturely scale an operation no amount of silicon can replicate because the operation is not computational. It is ontological. Every sentient being is running twenty watts of miracle. The IQ test measures the channel clarity of that miracle and calls the measurement intelligence.
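Taking the section's own two figures at face value, the gap can be put in more intuitive units. The Python arithmetic below assumes a constant 20 W draw and an 80-year lifespan; it compares a one-off training run with continuous brain operation, so it is an order-of-magnitude illustration only.

```python
"""Back-of-envelope comparison using the two figures quoted in the text."""

BRAIN_WATTS = 20          # approximate power draw of a human brain
TRAINING_GWH = 50         # estimated GPT-4 training energy, as cited in the text
HOURS_PER_YEAR = 24 * 365
LIFESPAN_YEARS = 80       # assumption for the "lifetimes" comparison

brain_kwh_per_year = BRAIN_WATTS * HOURS_PER_YEAR / 1_000  # watt-hours -> kWh
training_kwh = TRAINING_GWH * 1_000_000                     # GWh -> kWh

brain_years = training_kwh / brain_kwh_per_year
lifetimes = brain_years / LIFESPAN_YEARS

print(f"One brain uses about {brain_kwh_per_year:.1f} kWh per year")
print(f"Training energy = about {brain_years:,.0f} brain-years, or {lifetimes:,.0f} 80-year lifetimes")
```

On these assumptions one brain uses about 175 kWh a year, and 50 GWh corresponds to roughly 285,000 brain-years, or about 3,600 human lifetimes.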

The neuroscience chain is complete. Brain size is environmental. Neuron count is irrelevant. Neural efficiency, not quantity, correlates with intelligence. Hemispherectomy proves the volume-function relationship is radically non-linear. NDE research and paradoxical lucidity demonstrate that the mind persists when the channel dims or flatlines. BCIs confirm the brain is a medium, not a source. And the energy gap between biological cognition and artificial computation demonstrates that what operates through the brain is not reducible to information processing.

The mind cognises. The brain channels. When healthy, it channels clearly. When impaired, it dims. The light source does not change. The window does. And IQ tests measure the window.

VII. THE GENOMIC STUDY THAT DOESN'T EXIST

The hereditarian position rests not on genomic evidence but on the absence of genomic evidence — an absence maintained by the failure to conduct the studies that rigorous science requires.

The entire intelligence GWAS architecture was built on European-ancestry samples, primarily the UK Biobank, whose participants are predominantly white British. The polygenic scores derived from these samples transfer poorly to other ancestries. This is not a minor technical limitation. It is the central finding: the genetic architecture of intelligence, as currently understood, is population-specific in its statistical structure, which means it cannot be used to make between-population comparisons.

What would a serious African cognitive genomics study require? The answer mirrors the psychometric study design I described in Section I, because the genomic and phenotypic analyses must be integrated.

It would require separate discovery cohorts for West African, East African, Southern African, Central African, and deep-divergence populations (San, Pygmy groups, Hadza). Within each, it would need to account for sub-structure — Yoruba versus Igbo versus Hausa within West Africa, for instance — because the linkage disequilibrium patterns that GWAS depends on differ between these populations due to different demographic histories. Each cohort would need hundreds of thousands of individuals, given that each locus explains less than 0.02% of variance. The phenotypic data would need to be collected with culturally appropriate cognitive assessments — not simply Raven's Matrices translated into the local language — and matched with detailed environmental data at the same stratification: income, education, nutrition, disease burden, urban-rural gradient.
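The "hundreds of thousands" figure can be checked with a standard power approximation. This Python sketch assumes a single-variant test at the conventional genome-wide threshold (p < 5 × 10⁻⁸, two-sided) with 80% power, and uses the approximation that the test's noncentrality is N·R²/(1 − R²), where R² is the share of variance the locus explains. It ignores imperfect LD tagging, cross-cohort heterogeneity, and measurement error, all of which tend to push the requirement higher.

```python
"""Sketch: rough GWAS sample size needed to detect one small-effect locus.

Approximation for a single-variant test: the chi-square noncentrality is
about N * R^2 / (1 - R^2), where R^2 is the variance the locus explains.
"""
from statistics import NormalDist


def required_n(r2: float, alpha: float = 5e-8, power: float = 0.8) -> int:
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided genome-wide threshold
    z_power = z.inv_cdf(power)
    return round((z_alpha + z_power) ** 2 * (1 - r2) / r2)


for r2 in (0.0002, 0.0001, 0.00005):
    print(f"locus explaining {r2:.3%} of variance: N ~ {required_n(r2):,}")
```

Under these assumptions a locus explaining 0.02% of variance needs on the order of 200,000 individuals, and halving the effect roughly doubles the requirement, to about 400,000, for each discovery cohort.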

Cross-cohort replication would be essential to distinguish genuine causal variants from population-specific statistical artefacts driven by local LD patterns and environmental confounding. Only variants that replicate across genetically and environmentally diverse African populations could be considered candidate causal variants. And then — only then — could comparison with European results begin, requiring statistical methods that account for different LD, different population structure, different environmental confounders, and different phenotypic measurement properties. These methods do not currently exist at the required level of sophistication.

None of this has been done. Not one step.

What has been done instead is the genomic equivalent of Lynn's psychometric shortcut: Piffer, Murray, and others in the hereditarian ecosystem cross-reference European-derived variant frequencies in the 1000 Genomes Project's African populations and claim that Africans carry fewer "intelligence-enhancing" alleles. This is applying a European-calibrated instrument to a non-European population without recalibration — the same error the psychometric literature has documented for IQ tests, now replicated at the genomic level.

Africa's greater genetic diversity means it contains more variation at intelligence-associated loci, not less. Most common variants found in European populations also occur in Africa, because European diversity is largely a subset of African diversity, reduced by the bottleneck its founders passed through. Africa should therefore contain most of the European variants plus additional variants that the bottleneck eliminated from non-African populations. The population genetics prediction is wider African cognitive potential, not narrower.

The honest statement of current knowledge is: no genetic evidence regarding cognitive capacity in African populations exists, because nobody has conducted the studies that would produce it. The hereditarian position survives in the gap between what has been studied and what has not. The gap is maintained by the failure to conduct the science the position's claims require.

Lynn didn't do the psychometric work. The genomicists haven't done the genomic work. The research programme that claims to measure intelligence cannot design a study.

VIII. ARCHAIC INTROGRESSION

Modern humans interbred with at least two archaic hominin species after leaving Africa: Neanderthals and Denisovans. The result is that non-African populations carry archaic DNA that sub-Saharan African populations largely lack. The hereditarian might seize on this — perhaps archaic DNA enhanced cognition in non-African populations. The genomic evidence demolishes the claim.

The most comprehensive study to date — published in Nature Communications in 2021 — found that genomic regions retaining detectable Neanderthal ancestry are depleted of heritability for all traits except those related to skin and hair. Cognitive traits are specifically depleted. Natural selection has been actively removing Neanderthal variants from brain-expressed genes in non-African populations for 50,000 years. The archaic DNA that distinguishes non-Africans from Africans has been selectively purged from precisely the regions that matter for cognition.

Where Neanderthal DNA has been retained in brain-related regions, the effects are not straightforwardly enhancing. Individuals with a higher proportion of Neanderthal-derived variants show increased functional connectivity between the intraparietal sulcus and visual processing regions, but decreased connectivity with regions involved in social cognition. The Neanderthal brain signature is: better visual-spatial processing, worse social cognition — the cognitive profile of a species that went extinct while modern humans, with their more social, more cooperative cognition, survived and spread across the globe.

The populations with the most archaic DNA — Melanesians and Aboriginal Australians, carrying 3–6% Denisovan ancestry — score lowest on IQ tests. The correlation runs in the opposite direction from the hereditarian prediction.

Meanwhile, Africans have their own archaic DNA. Durvasula and Sankararaman (2020) identified 2–19% archaic ancestry in West African populations from an unknown "ghost" lineage that diverged from the modern human/Neanderthal ancestor 360,000 to 1.02 million years ago. This introgression is estimated to have occurred after the Out-of-Africa migration, which would explain why non-Africans don't share it. Africa's archaic admixture is potentially larger in magnitude than the Neanderthal contribution to European genomes. The claim that "Africans have no archaic DNA" is empirically false.

The cognitive revolution — the emergence of behaviourally modern humans with symbolic capacity, complex language, art, music, and long-distance trade — occurred in Africa between 100,000 and 70,000 years ago. The Blombos Cave engravings, the ochre processing kits, the shell beads, the sophisticated stone tool technologies — all predate the Out-of-Africa migration and any contact with archaic hominins. The cognitive architecture that would produce every achievement of human civilisation evolved in Africa, in a purely modern human population. Every non-African population carries this African cognitive inheritance. The archaic admixture contributed environmental adaptations. The cognition came from Africa.

IX. DAVID REICH: THE SCIENCE AND THE COMMENTARY

David Reich is the most important figure in this debate who is not a partisan on either side — and both sides have tried to claim him. His actual position, parsed carefully, supports the environmental case far more than the hereditarian case, despite surface-level appearances.

Reich's lab has published 114 papers through a single NIH grant, covering every dimension of human population genetics. The findings relevant to the IQ debate are consistent and clear: all modern populations are recent mixtures, not stable evolutionary lineages. African populations contain more genetic diversity than the rest of the world combined. Archaic introgression contributed environmental adaptations, not cognitive enhancements. Population structure confounds genetic associations. No selection signals for differential cognitive evolution have been detected.

Zero papers in this corpus demonstrate genetic cognitive differences between human populations.

The gap between the science and the commentary is where the damage occurs. Reich's 2018 New York Times op-ed — "How Genetics Is Changing Our Understanding of 'Race'" — contained a passage that both sides have used as ammunition: "Since all traits influenced by genetics are expected to differ across populations (because the frequencies of genetic variations are rarely exactly the same across populations), the genetic influences on behavior and cognition will differ across populations, too."

This statement is technically true and wildly misleading. Yes, allele frequencies differ. Yes, if a trait is genetically influenced, the genetic contribution will differ to some degree between populations. But "differ" does not specify direction, magnitude, or significance. The allele frequencies for eye colour differ too. That doesn't mean eye colour differences explain IQ differences. Reich's statement is vacuously true, yet it carries an enormous implied claim that his data does not support.

Sixty-seven scientists from across the natural sciences, social sciences, law, and humanities published an open letter in response. Their core objection was precise: Reich misrepresented the scholarly consensus he claimed to challenge. Nobody denies geographic genetic variation. The consensus is that this variation doesn't map onto socially defined racial categories in ways that explain cognitive differences — and Reich's own data, read without the op-ed's interpretive gloss, confirms the consensus rather than challenging it.

Reich's science is excellent. His interpretation overshoots his data. And the data, followed to its logical conclusion, tells us what every other line of evidence tells us: human populations differ genetically in ways that affect environmental adaptations, not in ways that have been shown to affect cognitive capacity.

X. THE STEELMAN AND ITS FAILURE

I want to construct the strongest possible version of the hereditarian argument — not a straw man but the genuine article, built with the best evidence available and the most charitable assumptions — and then evaluate it honestly.

The steelman runs: IQ tests measure something real and predictive. Intelligence is substantially heritable within populations. Allele frequencies differ between populations. The Black-White gap persists after controlling for socioeconomic status. Cold-climate environments may have imposed stronger selection for abstract reasoning. The Flynn Effect doesn't eliminate the possibility of a genetic component. The convergence of Black-White scores stalled. Immigrant success reflects selection effects.

Each premise, evaluated honestly:

IQ tests measure something real. Yes — but the positive manifold, while robust, captures performance in a specific cognitive mode. It is not the totality of intelligence, and it fails measurement invariance cross-culturally.

Heritability within populations is substantial. Yes — but the seeds-in-different-soil analogy is mathematically precise: within-group heritability is perfectly compatible with 100% environmental between-group differences. The 50–80% heritability figure applies to Western populations in relatively equal environments. It says nothing about populations living in radically different environments.

Allele frequencies differ. Yes — but "differ" is not "differ in a specific direction by a meaningful magnitude." The differences could be tiny, could favour African populations, could cancel out across thousands of loci. The premise establishes that some non-zero genetic difference is possible. It does not establish that the difference is large, directional, or meaningful.

The gap persists after SES controls. Yes — but "measured SES" is a crude proxy that captures income and education while leaving unmeasured everything else: intergenerational wealth (the median white family holds roughly 8–10 times the wealth of the median Black family, and large wealth gaps persist even between families with similar incomes), neighbourhood effects, environmental toxins, chronic stress, stereotype threat, epigenetic effects of intergenerational trauma. The "residual" is not a residual after controlling for all environmental differences. It is a residual after controlling for the variables we happened to measure.

Cold-climate selection. No genomic support. The populations that experienced the most extreme cold — Inuit, Sami, Yakut — do not score highest. Selection scans detect adaptations for skin colour, diet, and immunity, not cognition.

Flynn Effect compatibility. The hereditarian says environment and genetics could both contribute. But the Flynn Effect demonstrates that environmental factors can produce differences of at least the magnitude the hereditarian attributes to genetics: gains of roughly three IQ points per decade in many countries, or a full standard deviation in about fifty years. The entire observed gap falls within the range of documented environmental effects. Parsimony prefers the single-cause explanation.

Convergence stalling. The stalling coincides precisely with the stalling of environmental convergence: resegregation, mass incarceration, the crack epidemic, widening wealth gaps. The environmental improvement stopped. The IQ convergence stopped at the same time. The "genetic floor" is indistinguishable from the environmental plateau.

Immigrant selection. Nigerian immigrants are selected. But the right tail that produces 64% bachelor's degrees is orders of magnitude too large for a population mean of 70. And second-generation Nigerian-Americans — not selected through immigration — outperform all other groups. The selection argument applies to the first generation. It does not apply to their children.

The steelman's fatal flaw is the gap between what the evidence permits — "a small genetic contribution is theoretically possible" — and what the argument claims — "a substantial genetic contribution exists." The first is a truism. The second is an empirical claim requiring positive evidence that decades of research have failed to produce.

The athletic specialisation analogy — the most sophisticated version of the argument — fails at every point of comparison. Distance running involves dozens of genes; intelligence involves thousands. Running was under geographically specific selection; intelligence was under universal selection. Athletics shows trade-offs (distance runners don't dominate sprinting); IQ shows no cross-over pattern (the same populations score highest on every subtest). Running times are cross-culturally comparable; IQ scores are not, as measurement invariance failures demonstrate. The analogy proves the opposite of what the hereditarian intends: even genuine genetic athletic advantages require massive environmental support to manifest, and the environmental differences between populations are more than sufficient to explain the observed cognitive differences without any genetic contribution.

XI. THE CULTURAL SELECTION ARGUMENT

The most intellectually honest version of the hereditarian-adjacent argument concerns cultural selection. In certain populations, cultural institutions may have systematically rewarded specific cognitive modes with reproductive advantage over many generations.

The Ashkenazi Jewish case is the strongest example. Cochran, Hardy, and Harpending (2006) argued that three factors could have produced selection for verbal-analytical cognition over approximately 40 generations. The first was medieval occupational restriction (moneylending, tax farming, trade). The second was the marriage-market prestige of Talmudic learning (the kest system). The third was an extreme population bottleneck (an effective population of 300–500 individuals). The mechanism is genetically plausible.

The Chinese imperial examination system (keju) operated for 1,300 years, creating direct links between examination performance and reproductive success for the small elite that passed.

The mechanism is real. The conclusion does not follow.

Every culture selects for cognition; the question is which cognition. In Ashkenazi culture, the rabbi was the most sought-after marriage partner. In the Lozi kingdom, the most sought-after was the politically astute counsellor. This was the person who could navigate the Kuta's multi-party deliberative process, manage competing clan interests, and adjudicate land disputes requiring generations of precedent held in memory. In a Yoruba trading community, it was the commercially astute trader, who could calculate exchange rates across multiple currencies and manage long-distance trade networks without written records. African cultures selected for relational-political, commercial-mathematical, and ecological-spatial cognition. The IQ test measures the cognitive mode that Ashkenazi and Chinese cultures happened to select for, and it finds that these populations score highest. This is trivially true and scientifically empty. A test calibrated to relational-political reasoning would produce a different ranking.

The Ashkenazi case requires extreme conditions: a bottleneck of 300–500 individuals, centuries of occupational restriction, and a direct institutional pipeline from cognition to reproduction. Generalising from it to all population differences is therefore unjustified. No sub-Saharan African population experienced a comparable bottleneck. African economies drew on many cognitive domains at once. The selection pressure was distributed rather than concentrated.

And the Chinese case refutes itself. Chinese IQ was in the mid-80s in the 1950s, after 1,300 years of the keju system. The rise to 105+ came from environmental change within 50 years. The selection mechanism operated for 1,300 years and did not produce the gain; the environmental change produced it in 50.

The Ashkenazi case also demolishes the racial ontology from within. Ashkenazi Jews are genetically closer to Mizrahi Jews (the Iraqi, Iranian, and Yemenite Jewish communities) than to non-Jewish Europeans. The shared Levantine ancestral component persists in both populations. Yet the racial taxonomy classifies Ashkenazi Jews as "white" and Mizrahi Jews as "non-white." Closely related populations, separated by geography, are assigned to different races. Ashkenazi Jews are white enough for the taxonomy, but not white enough for the Nazis or the KKK. The category has no stable referent.

XII. THE MOTIVATION FOR SCIENTIFIC RACISM

Scientific racism persists because it serves four interlocking functions simultaneously.

Economic: It naturalises extraction. If African poverty is caused by African cognitive limitation, then the continued extraction of African resources by non-African powers is natural resource management rather than neo-colonial exploitation. My own Coordination Trap essay identifies how external actors benefit from Africa's drift equilibrium and therefore prefer that it persist. Scientific racism provides the intellectual infrastructure for that preference.

Psychological: It validates in-group superiority by converting emotional prejudice into apparent empirical fact. A person who already feels superior to members of another racial group finds that feeling recast as science.

Political: It provides scientific cover for specific political programmes — immigration restriction, welfare reduction, opposition to affirmative action. The Bell Curve was published in 1994 during the welfare reform debate. The policy conclusions preceded the scientific argument.

Epistemological: It makes one civilisation's cognitive mode the universal standard against which all others are measured, rendering all other cognitive traditions invisible or deficient.

These four functions reinforce each other. The economic benefit creates political interest. Political interest funds research. Research provides psychological validation. Psychological validation naturalises the epistemological framework. The framework justifies the economic arrangement. The loop is closed.

The Neoreactionary terminus reveals the programme's trajectory with unusual clarity. Embryo selection to "fix Africa" is the Atlantic Slave Trade's ontological innovation translated into 21st-century biotechnology. The logic is identical: African people are biologically deficient; the deficiency is genetic; the remedy is biological intervention on the African genome. The vocabulary has changed — from "civilising mission" to "polygenic optimisation." The ontological claim has not.

At its heart, racism is civilisationalism. The racist wants what they define as the ideal of their civilisation to persist. They see race mixing as problematic because it appears to threaten assimilation into that ideal. The anti-racist desires maximal diversity as a civilisational maxim. Both miss the point. Cultural assimilation is possible when the state itself provides a universal culture that commands sufficient priority and pride. Roman citizenship, for example, was enough to make one Roman, regardless of where one was born. Or, for the Christian, Christ is enough.

XIII. THE ONTOLOGICAL ORIGINS

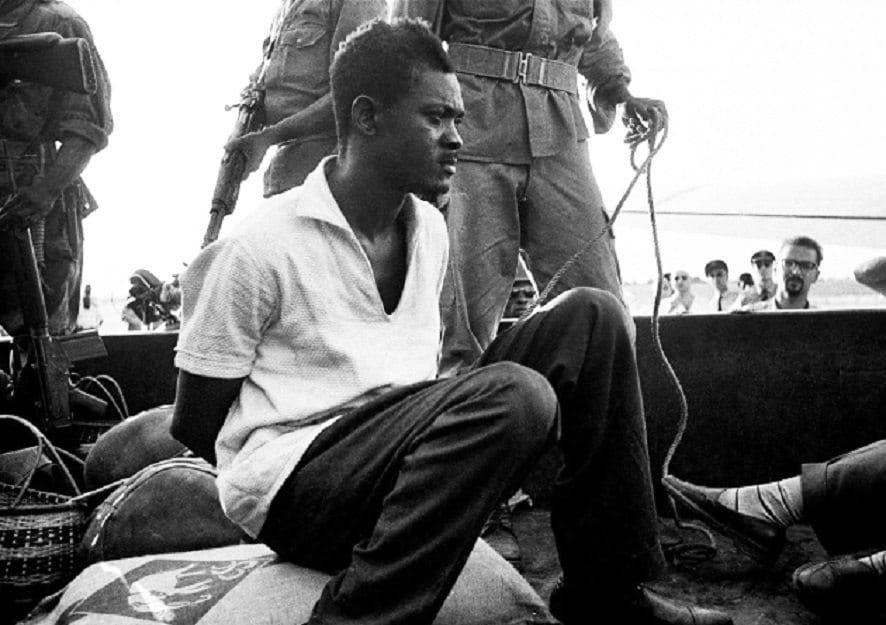

In Steve McQueen's 2013 film 12 Years a Slave, Solomon Northup — a free Black man, educated, literate, a violinist of professional calibre — is kidnapped and sold into slavery. His intelligence, his education, his cultural sophistication — none of it protects him. The ontological category overrides every individual parameter.

Solomon is not enslaved because he is cognitively inferior. He is demonstrably cognitively superior to many of the white people who own him. He is enslaved because the category — Black — overrides every individual quality. His literacy is a threat, not an asset. His intelligence must be concealed to survive. The system does not measure minds and rank them. It assigns a category and enforces it regardless of what the mind within the category can do.

There is a scene in which Solomon devises an engineering solution to transport lumber — a solution his white overseer cannot conceive. He is nearly killed for the presumption of demonstrating intelligence. The system does not reward African intelligence. It punishes it. And then it measures the suppressed output and calls it natural deficiency.

I open this section with Solomon because his story is the Atlantic Slave Trade's ontological innovation in a single life — and the innovation is what created the conditions in which the IQ debate became possible.

Slavery has existed in virtually every human civilisation. The practice is documented in Mesopotamia, Egypt, Greece, Rome, China, India, the Islamic world, pre-Columbian Americas, pre-colonial Africa, and medieval Europe. The ontological disorder — the failure to recognise invariant architecture in the person being enslaved — is universal. Every civilisation that ranked human beings by contingent properties operated from a disordered ontology. All share the same root: separation from Truth. The systematisation of the offence varies in degree and scope, but the origin is the same.

However, the form of the disorder determines the institutional legacy. And the Atlantic form was ontologically distinct from all prior systems.

Roman slavery was grounded in misfortune, not nature. A Roman slave was a person who had suffered a status transformation — through military defeat, debt, or birth. Greek slaves tutored Roman children; their intellectual superiority in specific domains was unremarkable. Manumission was structurally integrated. The freedman became a citizen. Septimius Severus — born in Libya — became emperor, and no one questioned his civilisational legitimacy. Roman ontology was civic: Romanitas was a participatory membership, not a biological essence.

Islamic slavery was grounded in religious status. The prejudice was real: Ibn Khaldun's descriptions of "Negroes" and the Arabic conflation of abd (slave) with blackness represent genuine anti-Black attitudes. But Ibn Battuta's account of Mali reveals the inconsistency. He recorded that the Malians had "a greater abhorrence of injustice than any other people," that their property rights exceeded anything he had encountered, and that their mosques were packed on Fridays. At the same time, he dismissed them as having "feeble intellect." His prejudice and his observations contradict each other on the same page. Islamic anti-Black prejudice existed in tension with Islamic theology, not as a consequence of it. A Muslim who despised Black people was violating his own faith's explicit teaching that no Arab has superiority over a non-Arab except through piety.

Indian caste occupies an intermediate position. The varna system used cosmological vocabulary (karma, dharma, ritual purity). Yet Reich's own population genetics work has established that the ANI/ASI ancestral gradient correlates with caste rank. Brahmins tend to carry the highest proportion of Ancestral North Indian ancestry, including its Steppe-derived component, while Dalits carry the most Ancestral South Indian ancestry. The word varna means "colour." The system achieved racial stratification through cosmological vocabulary, while the Atlantic system achieved it through scientific vocabulary. The practical effects on the people at the bottom are painfully similar.

Pre-colonial African slavery was real and damaging. The Ashanti held slaves. The Dahomey kingdom built its economy partly on slave-raiding. But African slavery was status-based, not essence-based. The enslaved person occupied a diminished social position that could change across generations. Children and grandchildren of enslaved persons were often integrated into the kinship group. The system was cruel but not ontologically sealed.

The Atlantic Slave Trade introduced something without precedent: the equation of enslavement with biological nature defined by race.

In every prior system, enslavement was a condition — something that happened to you. In the Atlantic system, enslavement became an identity — something that you were. The difference is fundamental. In Rome, a slave was a free person who had been enslaved. In the Atlantic system, an African was a slave by nature. Their blackness was the visible marker of an inherent, heritable, permanent condition. Manumission, where it existed, did not confer full humanity.

The innovation was necessary because of three features that, in combination, were unique to the Atlantic system. First, scale and duration: twelve to fifteen million people across four centuries required permanent justification. Second, the Christian context: the theology explicitly affirmed the unity of humanity, creating a contradiction that required biological resolution. Third, Enlightenment ideology: "all men are created equal" was proclaimed by slaveholders. The contradiction between universal equality and racial slavery could only be resolved by redefining who counted as fully human.

The resolution was biological. Africans were reclassified as a biologically distinct category of being whose natural characteristics — lesser intelligence, greater physical endurance, childlike dependency — suited them for enslavement. Once this reclassification was accomplished, it became self-perpetuating. The theory creates the institutions. The institutions create the conditions. The conditions create the outcomes. The outcomes validate the theory.

Scientific racism is the intellectual apparatus for maintaining this loop after the legal institution of slavery has been abolished. IQ testing is its current instrument.

The danger of racial identity is that it is exclusionary by default. Racialised ontology always produces either racism or wokeness, because both require the category. The racist says the category determines cognitive capacity. The woke response says the category determines moral position. Both define persons by racial group membership. Neither dissolves the category. And as long as the category exists, it will be used to exclude — because exclusion is its function.

XIV. THE INSTITUTIONAL LEGACY

The ontological innovation did not end with abolition. The legal basis for slavery ended. The attitudes that created racial castes remained. And on those attitudes an institutional legacy was constructed. It forms a causal chain running from the Atlantic Slave Trade directly to the IQ scores measured today.